Abstract

Video ads are increasingly popular in digital marketing, but advertisers are unsure about how much they improve performance over static ads and which consumer response, such as unmuting or watching through the end, matters most. Using data from the online retail site Amazon.com, we apply causal inference methods to both a monthlong and a yearlong time horizon and find support for our hypotheses. First, brands that invested in Sponsored Brands video (SBv) ads in addition to static sponsored ads had a 25% higher click-through rate (CTR) and 10% higher year-over-year sales growth. Second, individual consumer CTR depends on ad format (video vs. static), unmuting, and time watched. For audiences in 15 countries across North America, Europe, the Middle East, Asia, and Australia, we find a 17.7 times higher CTR on SBv versus static images, especially for unmuted versus muted SBv. Furthermore, the muted consumer CTR increases with the viewed video length, with a substantial increase at a viewed video length longer than 5 s. Surprisingly, the unmuted CTR remains over 3 times that of muted CTR at all viewed video lengths, showing only a CTR uptick when the video was completed. Thus, if the ad is not watched with sound for its full length (the best-case scenario), advertisers should strive for video ads that (1) are unmuted, even for a short time, or (2) play at least 5 s on mute.

Video is like the Kobe beef of programmatic advertising (McBee 2015).

Introduction

Both managers and academics maintain that video advertising is more effective than static imagery. However, video ads are also three to four times more expensive than static ads (McBee 2015), and how much they causally add to click-through rates (CTRs) and sales growth is unclear. Indeed, the evidence for the greater effectiveness of video is mostly anecdotal or based on surveys and correlational analysis. According to HubSpot, 90% of respondents report that product videos are helpful in the decision-making process (Digital Marketing Trends 2017). Semerádová and Weinlich (2020) show that video ads have an average CTR of 1.84%, which is the highest of all digital ad formats, and that brands that use video ads grow 49% faster than brands that do not use video. However, their results are correlational and do not involve a causal analysis, as used in our study, nor do the authors compare short-term with long-term results. Moreover, video ads provide more metrics than static imagery, such as whether the consumers watched it muted or unmuted (with sound on) and for how long (McBee 2015). How can managers leverage these metrics to understand how viewed length matters depending on whether the video is muted or not?

We made a conscious decision to employ three distinct methodologies to test three distinct questions. First, do SBv ads have a short-term impact? Second, do SBv ads have a long-term impact? Third, do unmuted SBv ads drive higher clicks than static imagery ads? Using a combination of different causal inference methodologies, we aim to test holistically the proposition that SBv drives incremental ad performance, especially when videos are unmuted, i.e., play with sound on.

SBv and other video ads, such as Streaming TV and Amazon DSP video or Online Video, may have long- and short-term effects, improving awareness, consideration, clicks, and sales (e.g., Giordano et al. 2015). We chose to examine SBv because brands of all sizes use this format on Amazon to reach more than 300 M consumers worldwide, with monthly sample sizes ranging from 158 to 328 M (see Appendix 1). Moreover, we analyze the impact of SBv on two key performance indicators used by advertisers and also in previous research (Shah 2021; Pauwels and Shah 2022): CTR and sales growth. Our study stands apart from others by investigating different time horizons with different causal methods and by offering actionable insights into specific video features.

Causal modeling of the SBv impact proceeds in two analyses with different time horizons. The first, shorter-term horizon, is one month, a typical look-back window in digital advertising. We use a two-stage Gaussian process—our version of causal multi-task Gaussian processes (Alaa and Van der Schaar 2018; Chernozhukov et al. 2018)—to evaluate the impact of SBv adoption on sales growth, with a matched sample of 25,364 advertisers in North America and Europe. We find that brands that launched an SBv campaign for the first time obtained an average 21.3% increase in sales the following month compared with those that did not. The second, longer-term horizon, is one year, and we applied propensity score stratification (Austin 2011) to compare sales growth and CTR. These analyses show that adding SBv to the ad mix or increasing SBv share of ad spend increased sales in the short run and both sales and CTR in the long run. We find that brands that used SBv obtained 1.25 times higher CTR and 1.1 times higher year-over-year (YoY) sales growth (2020 vs. 2019) than brands that used only sponsored ads.

Digging deeper into the video features, we perform an individual consumer-level analysis across 15 countries in North America, Europe, the Middle East, Asia, and Australia over one year (May 2021–April 2022), with a 158 M sample size. We find a 17.7 times higher CTR on SBv versus static images, and we verify these results in out-of-sample testing in the May 2022–October 2022 6-month holdout. In addition, we show that consumers who unmuted the video ad (turned sound on) clicked 3.1 times more than consumers who kept their videos muted (sound off). Furthermore, muted consumer CTRs increased with the viewed video length, with a substantial increase at a video length greater than 5 s. Surprisingly, the unmuted CTR remained over 3 times that of muted CTR at all viewed video lengths (Footnote 1), showing only a CTR uptick when the video was completed. Thus, advertisers should feature video ads that play with sound for the full length (the best-case scenario), or they should strive for video ads that (1) are unmuted, even for a short time, or (2) play at least 5 s on mute. Finally, we find no evidence of “consumer fatigue” (i.e., consumers clicking through at a lower rate when they watch more video ads) at any level of exposure to unmuted videos, as the unmuted CTR remained 2 times as high as muted CTR, even for consumers exposed to more than 90% of unmuted (vs. muted) videos.

This study makes both methodological and substantive contributions. To the best of our knowledge, we are the first to combine Gaussian processes for short-term impact with propensity score stratification for long-term impact. For managers, we quantify the short- and long-term benefits of video versus static ads on both CTR and sales growth. Moreover, we provide tactical insights into the importance of viewed length combined with sound on (unmuted) versus off (muted).

Research background

This study analyzes the causal effect of video versus static ads in the context of Sponsored Brands video (SBv), a relatively new mid-funnel Amazon Ads product, as shown in Fig. 1 (Amazon 2021). Our focus is on the brand-level impact of and consumer reaction to SBv, an outstream video ad that places “compelling advertising content outside of the traditional video stream, such as within a text article, newsfeed, or slideshow” (Teads 2015).

Our study touches on three research streams: consumer response to video vs static images, advertising’s impact on consumer interest and purchase, and the features of digital video advertising. We discuss these literatures in this order.

First, video has been shown to draw substantially more attention than static images, both in terms of viewing length and frequency (Chattington et al. 2009; Decker et al. 2015). According to Media Richness theory (MRT), different media have different degrees of richness, i.e., power to reproduce the information that media transmits (Daft and Lengel 1986). Video includes moving images and sound, and is generally processed and recalled better than text or audio (Schnotz and Bannert 2003; Shorter and Dean 1994). Because video is continuous, it gives more information than even a series of static pictures could (Tversky et al. 2002; Betrancourt and Tversky 2000). Moreover, based on MRT, we expect that videos played with sound on (thus engaging both the eyes and the ears) are more effective in influencing consumers than those with sound off.

However, empirical studies on the effectiveness of video versus static images show mixed results. On one hand, video creation companies claim that video ads help increase traffic and dwell time because they attract more attention and allow brands to educate the consumer (Grgurovic 2022). Facebook and Instagram report that video ads get, respectively, 30% more reach and 3 times as much engagement as image ads (ibid). Moreover, Semerádová and Weinlich (2020) show that video ads enjoy the highest click-through, and early Internet studies show that websites with richer media such as video and audio are rated better than websites with only text and pictures because of their vividness (Appiah 2006). On the other hand, several studies find that video is not more effective, and sometimes even less effective, than static images in ads (Dardis et al. 2016) and in learning environments (Hegarty et al. 2003; Mayer et al. 2005; Schnotz et al. 1999; Tversky et al. 2002). A key reason may be that video is unnecessary and overloads the audience’s cognition (Sweller 2005), as it is faster to process an image (Grgurovic 2022). Moreover, videos are more expensive to produce than static images (ibid). Therefore, the research question of whether and how much video ads are more effective than static image ads is both unanswered and managerially important.

Second, advertising effectiveness is measured both in purchase outcomes and in consumer mindset metrics such as brand consideration (Roberts and Lattin 1997; Srinivasan et al. 2010; Pauwels et al. 2013). In online settings, such consideration can be measured by a consumer clicking through on an ad, which also correlates strongly with the price brands pay for the ad. As the desired ‘mid-funnel’ outcome, consideration is often addressed through mid-funnel ad actions (Batra and Keller 2016), such as Sponsored Brands on Amazon.com (Qin and Pauwels 2023). Recent research in online marketing has established that both video and static display mid-funnel ads increase brand consideration (Brentlinger 2020) and that brands using mid-funnel ad tactics achieve better outcomes (Bagadia and Quint 2021). Bagadia and Quint (2021) show that brands could attribute 16 times more sales to the previously underused channels in the mid-funnel.

Regarding viewed video length, Becker et al. (2022) argue that video ad content can have an impact on the number of consumers skipping the ad (0 s watching ads or “zapping”). Moreover, specific ad content can affect ad skipping after the initial preview (Belanche et al. 2017; Campbell et al. 2017; McGranaghan et al. 2022). Becker et al. (2022) find that professional video ad content techniques can reduce the number of consumers skipping the video ad and thus increase the number of consumers watching the video ad longer. Furthermore, Shehu et al. (2016) show that high likability at the beginning and the end of a video ad is the most important. However, they do not investigate how this may differ for muted versus unmuted videos. Video sound is an important and often overlooked feature. For example, Rogers and Weber (2019) report that unmuted audio (music in their case) affected the experience of video game players, albeit in a survey with a relatively small sample.

While this recent literature addresses several video-related advertiser issues, it does not address our two research questions (RQ):

- RQ1: What is the causal CTR and sales impact of launching a video campaign?

- RQ2: How much do viewing time, sound-on, and share of sound-on videos affect CTR?

To answer these questions, we carry out both a causal analysis (RQ1) and an exploratory study of the CTR impact of video ads’ viewing characteristics (RQ2) for the specific Amazon Ads product of SBv. Our hypotheses are straightforward: we expect video versus static ads to result in higher CTRs and sales growth, and this benefit should be more pronounced when consumers watch the video for a longer time and with sound. We have no strong reason to suspect consumer fatigue and investigate all effect sizes in an exploratory manner. This approach is typical in marketing analytics, which has evolved to best understand and quantify patterns in big data (Iacobucci et al. 2019).

Methodology

While we could test RQ1 with experiments or causal inference based on observational data, given the prohibitive costs of experiments at scale, we chose the latter option. Specifically, we analyze the actual ads of tens of thousands of advertisers (high sample size and external validity) while addressing internal validity concerns by comparing each video advertiser with its statistical “twin” in our data. The exact identification of these advertiser twins differs for the short- and long-term horizon, as we detail next.

Shorter-term causal analysis methodology and data

To measure the causal impact of advertisers that adopted SBv for the first time, we employed a machine learning causal inference methodology to determine the effect of taking an action on advertiser performance in a shorter term of one month. In an optimal experimental setup, for every advertiser that takes a particular action, we should have at least one otherwise identical advertiser twin that did not take that action and against which we can compare the results. Our algorithm is based on Van der Schaar and Alaa (2017) as well as Alaa and Van der Schaar’s (2018) proposed method called “causal multi-task Gaussian processes,” which estimates conditional average treatment effects and has competitive performance on various metrics (e.g., root mean square error, coverage) as compared with existing methodologies in causal inference, such as causal forests (Wager and Athey 2018) and propensity score matching (Austin 2011), when applied to observational data. Our algorithm builds on the idea of Gaussian processes in the context of multi-task learning for the estimation of individual treatment effects (Bonilla et al. 2008), and according to its properties and results, it is a suitable alternative for impact estimation studies. The algorithm generates adaptive weights (Footnote 2) that we use to construct a statistical twin for every treated sample; we then use those pairs to estimate the causal impact of adopting these ad products for the first time, as described in Pauwels et al. (2022).
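To make the idea of a weighted statistical twin concrete, the following is a minimal sketch and not the causal multi-task Gaussian process algorithm itself. It assumes a simple RBF kernel over advertiser covariates as a stand-in for the adaptive weights; the function names, the `length_scale` parameter, and the simulated data are all hypothetical.

```python
import numpy as np

def rbf_weights(x_treated, X_control, length_scale=1.0):
    """Normalized RBF-kernel weights of all control units for one treated unit.
    (Assumption: a fixed kernel stands in for the paper's adaptive weights.)"""
    sq_dist = np.sum((X_control - x_treated) ** 2, axis=1)
    w = np.exp(-0.5 * sq_dist / length_scale ** 2)
    return w / w.sum()

def twin_effects(X_treated, y_treated, X_control, y_control, length_scale=1.0):
    """Per-unit effect: treated outcome minus the weighted twin's outcome."""
    effects = []
    for x, y in zip(X_treated, y_treated):
        w = rbf_weights(x, X_control, length_scale)
        twin_outcome = float(w @ y_control)  # synthetic untreated twin
        effects.append(y - twin_outcome)
    return np.array(effects)

def baseline(X):
    """Simulated outcome driven by covariates only (hypothetical)."""
    return 0.5 * X[:, 0] - 0.2 * X[:, 1]

# Simulated advertisers: three covariates, a known lift for treated units.
rng = np.random.default_rng(0)
X_control = rng.normal(size=(1000, 3))
X_treated = rng.normal(size=(200, 3))
true_lift = 0.2
y_control = baseline(X_control) + rng.normal(scale=0.05, size=1000)
y_treated = baseline(X_treated) + true_lift + rng.normal(scale=0.05, size=200)

effects = twin_effects(X_treated, y_treated, X_control, y_control, length_scale=0.5)
print(f"estimated average effect: {effects.mean():.3f} (simulated lift: {true_lift})")
```

Each treated unit is compared with a weighted average of untreated units that resemble it, and averaging the per-unit differences gives the estimated impact. A Gaussian process approach would additionally learn the kernel and provide uncertainty estimates.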

Beginning with 78,766 advertisers in the US marketplace from December 2019 to November 2020, we were able to match 25,364 advertisers using this methodology. Therefore, the sample size is 25,364 (treated and non-treated) for the propensity score at the advertiser–brand level, meaning that we treat each advertiser and brand combination as a separate observation.

Long-term causal analysis: propensity score stratification

To measure sales and CTR impact in the longer term of a year, we have more data restrictions and thus a smaller sample size of statistically identical twins. We found that a propensity score stratification algorithm performs better in our long-term analysis with a relatively small sample and fewer features than the Gaussian processes algorithms employed in our short-term analysis. Specifically, we calculated the propensity scores for each brand based on ad spend, logarithm of total sales in the previous year, total units sold, total impressions, total clicks, and average selling price. Our response variables were the logarithm of CTR and natural logarithm of total YoY sales growth. Next, we binned the brands into 20 bins and included in our analysis only the bins whose treated and untreated groups have no significant differences in propensity scores. We observed a greater overlap of all metrics between the treated (adopted SBv) and untreated (did not adopt SBv) brands after matching with propensity score stratification, which suggests an improvement in similarity between both groups (Appendix 2). Finally, we calculated the weighted lift of success metrics between the two groups of treated and non-treated brands based on sample size. This procedure led to 915 brands with matched probabilities to adopt SBv, 419 of which actually did adopt SBv (treated) and 496 that did not (untreated). This sample size compares favorably with published propensity score matching sample sizes of 394 twins in Kumar et al. (2016) and 231 twins in Datta et al. (2018). Appendix 2 provides further details on the distributional characteristics.
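The stratification steps described above can be sketched as follows. This is an illustrative outline under stated assumptions, not our production code: the propensity model is a plain gradient-descent logistic regression, the balance check is a Welch t-statistic on the propensity scores within each bin, and the covariates and outcomes are simulated. All function names and thresholds (`min_per_arm`, `t_max`) are hypothetical.

```python
import numpy as np

def fit_propensity(X, treated, lr=0.5, n_iter=2000):
    """Logistic regression by gradient descent; returns P(treated | X)."""
    Xb = np.column_stack([np.ones(len(X)), X])
    beta = np.zeros(Xb.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-(Xb @ beta)))
        beta -= lr * (Xb.T @ (p - treated)) / len(treated)
    return 1.0 / (1.0 + np.exp(-(Xb @ beta)))

def welch_t(a, b):
    """Welch t-statistic for the difference in means of two samples."""
    return (a.mean() - b.mean()) / np.sqrt(a.var(ddof=1) / len(a) + b.var(ddof=1) / len(b))

def stratified_lift(score, treated, outcome, n_bins=20, min_per_arm=5, t_max=1.96):
    """Sample-size-weighted treated-minus-untreated outcome gap over balanced bins."""
    edges = np.quantile(score, np.linspace(0.0, 1.0, n_bins + 1))
    bins = np.clip(np.searchsorted(edges, score, side="right") - 1, 0, n_bins - 1)
    diffs, weights = [], []
    for b in range(n_bins):
        t_mask = (bins == b) & (treated == 1)
        c_mask = (bins == b) & (treated == 0)
        if t_mask.sum() < min_per_arm or c_mask.sum() < min_per_arm:
            continue  # not enough overlap in this bin
        if abs(welch_t(score[t_mask], score[c_mask])) > t_max:
            continue  # propensity scores differ between arms: drop the bin
        diffs.append(outcome[t_mask].mean() - outcome[c_mask].mean())
        weights.append(t_mask.sum() + c_mask.sum())
    if not diffs:
        raise ValueError("no balanced bins to compare")
    return float(np.average(diffs, weights=weights))

# Simulated brands: adoption depends on covariates that also drive the outcome.
rng = np.random.default_rng(1)
n = 4000
X = rng.normal(size=(n, 3))
p_true = 1.0 / (1.0 + np.exp(-(0.8 * X[:, 0] - 0.5 * X[:, 1])))
treated = (rng.random(n) < p_true).astype(int)
true_effect = 0.10  # simulated lift in the log outcome
outcome = 0.3 * X[:, 0] + true_effect * treated + rng.normal(scale=0.1, size=n)

score = fit_propensity(X, treated)
estimate = stratified_lift(score, treated, outcome)
naive = outcome[treated == 1].mean() - outcome[treated == 0].mean()
print(f"naive gap: {naive:.3f}, stratified estimate: {estimate:.3f}")
```

In this simulation the naive comparison overstates the effect because adopters differ from non-adopters on the covariates, whereas comparing within propensity bins removes most of that confounding.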

Consumer-level video-watching characteristics

To assess the impact of video-watching characteristics, we used each consumer as its own control (an “identical twin”) and compared the consumer-level CTR by whether the consumers turned on sound, how long they watched, and how many video versus static ads they watched in the same month. The last element helps determine consumer fatigue; that is, consumers may become saturated with video ads if these ads represent a high share of those they watch in the channel. If this is the case, a novelty effect may explain the positive initial results for video in previous research (Study.com 2013; Gravetter and Forzano 2015), and thus these results would not hold up in situations in which video ads become the norm. To uncover novelty and consumer fatigue, we defined consumer video exposure as the percentage of video impressions viewed longer than 5 s with at least 50% of the pixels in the view, broken down by decile of the ratio of such video to the total of video and static impressions.

Our audiences were in 15 countries across the world, including the United States, Canada, the United Kingdom, Germany, France, Italy, Spain, Poland, Sweden, Japan, Singapore, United Arab Emirates, Saudi Arabia, Turkey, and Mexico, with the global sample size greater than 158 M (Appendix 1). We selected consumers who each had both static and video impressions during the same month, thus controlling for consumer differences (consumers exclusively exposed to one or the other format may differ in many other ways). We repeated this selection over the 12 rolling months, from May 1, 2021, to April 30, 2022. We calculated video exposure as the percentage share of video impressions of the same consumer in the same month. We calculated the video-to-static CTR ratio across all consumers with the same video exposure. To confirm that the video consumption was not high just by chance during this period, we repeated our analysis for an out-of-sample period of six months, from May 1, 2022, to October 31, 2022, as discussed in Results.
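As an illustration of this calculation, the sketch below computes video exposure per consumer, assigns each consumer to an exposure decile, and pools clicks and impressions within each decile to obtain the video-to-static CTR ratio. The row layout, the pooling choice, and all numbers are hypothetical and serve only to show the mechanics.

```python
from collections import defaultdict

def ctr_ratio_by_exposure(rows):
    """rows: (consumer_id, video_impr, video_clicks, static_impr, static_clicks)
    for one month. Returns {exposure_decile: video CTR / static CTR}."""
    totals = defaultdict(lambda: [0, 0, 0, 0])
    for _consumer, v_impr, v_clicks, s_impr, s_clicks in rows:
        if v_impr == 0 or s_impr == 0:
            continue  # keep only consumers who saw both formats that month
        # Integer arithmetic avoids float rounding at decile boundaries.
        decile = min(10 * v_impr // (v_impr + s_impr), 9)
        bucket = totals[decile]
        bucket[0] += v_impr
        bucket[1] += v_clicks
        bucket[2] += s_impr
        bucket[3] += s_clicks
    ratios = {}
    for decile, (v_impr, v_clicks, s_impr, s_clicks) in sorted(totals.items()):
        if s_clicks == 0:
            continue  # ratio undefined without any static clicks
        ratios[decile] = (v_clicks / v_impr) / (s_clicks / s_impr)
    return ratios

# Hypothetical monthly rows; c5 saw only video and is excluded.
rows = [
    ("c1", 20, 4, 80, 1),
    ("c2", 25, 5, 75, 1),
    ("c3", 10, 1, 90, 1),
    ("c4", 60, 6, 40, 1),
    ("c5", 5, 1, 0, 0),
]
ratios = ctr_ratio_by_exposure(rows)
for decile, ratio in ratios.items():
    print(f"exposure {decile * 10}-{decile * 10 + 10}%: ratio {ratio:.1f}")
```

Restricting the rows to consumers with both formats in the same month is what makes each consumer act as their own control.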

Results

Model-free evidence

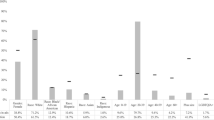

Before conducting the matching exercise to determine the set of advertisers that did and did not adopt SBv, we assessed the data for trends and found that ad spend had significantly increased YoY. As the study examined the period of COVID-19, the pandemic would have had an impact on the substantial rise in ad spend and also resulted in increased YoY sales growth for advertisers even without SBv. Before the matching exercise, we observed that the untreated group had approximately the same CTR as the treated group, but the number of treated impressions was almost 39 times that of untreated impressions, suggesting a far broader reach of the treated group and making our comparison not apples to apples. Moreover, in the case of YoY sales growth, we observed that the treated group (SBv advertisers) had a 135 times higher YoY sales growth than the untreated group. The treated advertisers not only increased their ad spend on Sponsored Products (SP) and Sponsored Brands (SB), but also adopted SBv. The matching exercise was therefore necessary for us to identify comparable sets of advertisers that did and did not adopt SBv in their advertiser portfolio, as we discuss subsequently. We also explored the distribution of consumer impressions by different levels of video and static ads exposure, as shown in Fig. 2.

We observed the video exposure of the same consumer in the same month; that is, we created decile buckets of consumers based on the share of video among the total video and static impressions of the same consumer. As Fig. 2 shows, the buckets’ weights by impressions were concentrated between 10 and 30%, with the maximum of 20%. This means that almost 90% of all consumers had a share of video impressions between 10 and 30%.

Causal analysis results

Returning to the brand-level study, as Fig. 3 shows, our shorter-term causal analysis demonstrated that brands that launched an SBv campaign for the first time had an average 21.3% increase in sales the following month, compared with those that did not. As Fig. 4 shows, our longer-term causal analysis demonstrated that brands that used SP + SB + SBv achieved higher average YoY sales growth of 10% (Fig. 4a) and higher CTR of 1.25 times (Fig. 4b) than brands that used SP + SB only.

Does this video advantage also show up in consumer-level data? Fig. 5 shows that the video-to-static CTR ratio of the same consumer in the same month was always well above 1: more than 4.8 over all periods and averaging 17 over 12 months. Indeed, SBv CTR was higher than static SB CTR in every market we analyzed (United States, Canada, United Kingdom, Germany, France, Italy, Spain, Poland, Sweden, Japan, Singapore, United Arab Emirates, Saudi Arabia, Turkey, and Mexico). Moreover, this higher CTR was consistent over time in each country market.

Fig. 5 Video-to-static CTR ratio by consumer exposure to video (logarithmic scales; see Appendix 1 for more details)

The average video-to-static CTR ratio was stable over 12 months, ranging between 16.7 and 17.9. It remained within the same range at 17.7 for our out-of-time test month ending May 31, 2022 (Footnote 3). Moreover, we repeated our analysis for an out-of-sample period of six months, from May 1, 2022, to October 31, 2022, controlling for ad placements, and obtained similar results. This means that the average video CTR was 17 times the static CTR for a matching sample of more than 158 M global consumers exposed to both video and static ads during the same month with similar SB ad placements (Appendix 1). Note that we included only videos viewed longer than 5 s in Fig. 5.

Now that we have established that brands using SBv had higher annual and monthly sales growth and CTR, which consumer video viewing features contributed to that CTR? Fig. 6 shows that sound-on videos achieved at least 3 times higher CTRs than muted videos, a difference significant at p < 0.01 for any viewed length.
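One common way to test a difference between two click-through rates is a two-proportion z-test. The sketch below is offered as an assumption about how such a comparison could be run, not as a description of the exact test behind Fig. 6, and the click and impression counts are hypothetical.

```python
import math

def two_proportion_z_test(clicks_a, n_a, clicks_b, n_b):
    """Two-sided z-test for the difference between two click-through rates."""
    p_a, p_b = clicks_a / n_a, clicks_b / n_b
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1.0 - pooled) * (1.0 / n_a + 1.0 / n_b))
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2.0))  # two-sided normal tail
    return z, p_value

# Hypothetical counts for one viewed-length bucket.
unmuted_clicks, unmuted_impr = 300, 10_000
muted_clicks, muted_impr = 100, 10_000

z, p_value = two_proportion_z_test(unmuted_clicks, unmuted_impr, muted_clicks, muted_impr)
ctr_ratio = (unmuted_clicks / unmuted_impr) / (muted_clicks / muted_impr)
print(f"CTR ratio: {ctr_ratio:.1f}, z = {z:.2f}, significant at 1%: {p_value < 0.01}")
```

Repeating the test per viewed-length bucket would correspond to the statement that the difference holds at any viewed length.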

We find that the benefits of a longer viewed length occur at different times for muted versus unmuted video ads. The muted CTR increased by an order of magnitude when the muted consumers viewed SBv for the first 5 s. By contrast, the unmuted (sound on) CTR increased only marginally for viewed lengths up to 75% but had a strong uptick when the consumer finished watching the full video (Footnote 4). We infer that consumers need at least 5 s of muted video to receive sufficient information to click through, while even a few seconds of unmuted video leads some consumers to do so, with a fully viewed video attracting the most interest in the offer.

Does limited exposure to unmuted videos (novelty effect) explain these results? We observe the sound-on benefits over the entire range of percentage exposure to unmuted versus muted video ads. Unmuted CTR ranged from 1.9 times that of muted CTR for consumers who completed more than 90% of unmuted videos to 3.9 times for consumers who completed less than 1% of unmuted videos. Thus, we find no evidence of consumer fatigue in CTR for sound-on videos.

Robustness checks

With the aim to stress-test our results, we considered consumer heterogeneity for video preference as well as video viewing of less than 5 s. First, are there any consumers who, all things being equal, prefer clicking on static over video ads? Such consumers exist, but they are a clear minority, at least for the placements of SB video and static ads in our analysis. For example, the consumers who were more than 25% likely to click on a static ad versus a video ad constituted less than 1% of the total consumers at all levels of video exposure. Note that our test compared video versus static for only one type of ads, SB. These ads had similar placements and content on the search page, and we controlled for SBv ad placements when comparing SBv with static SB ads. Still, it is possible that consumers who are more likely to click on static (vs. video) ads simply never click on SBv ads and strongly prefer clicking on other types of ads instead. Nevertheless, even in such a case, our results clearly indicate that the consumers who would ever click on SB ads strongly prefer clicking on video over static ads. We also conclude that the novelty effect of SBv ads cannot explain the higher video clicks at all levels of video exposure (Study.com 2013; Gravetter and Forzano 2015).

Second, we further noticed that a significantly higher video-to-static CTR ratio across all exposure levels and across all months was consistent only for videos viewed longer than 5 s. The video-to-static CTR ratio on SBv viewed between 0 and 5 s declined over 12 months for consumers with a high level of video exposure as the share of video impressions increased over time. Consumers exposed more than 50% to SBv viewed for 0 to 5 s had a video CTR that was similar to static CTR. As we expected, consumers who viewed videos for 0 s and were exposed to more than 50% of videos among their impressions had a reaction to SBv similar to their reaction to static ads, suggesting that these consumers did not get sufficient information about a video during the 0–5 s of watching. Also note that the Interactive Advertising Bureau standard is 2 s of exposure, and other industry standards only count 5 s of video exposure as viewable impressions.

The slope of CTR versus video exposure in Fig. 5 is slightly negative, and the deviations are partly due to rounding (Footnote 5). Appendix 1 provides more data on the consumer sample size.

Finally, the number of videos watched for 0 s heavily influenced traditional video CTR. This is one of the reasons that a 1.25 times increase in CTR for brands adopting SBv in Fig. 4b is below the 17 times video-to-static CTR ratio reported in Fig. 5. Our rationale is twofold: first, brands adopting SBv still employ a significant share of static SB impressions (Appendix 3), and second, a significant number of SBv impressions are exposed for 0 s, or less than 5 s.

Nevertheless, even a 1.25 times CTR increase after adopting a video format (see Fig. 4b) helps brands achieve an important performance improvement.
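The gap between the brand-level 1.25 times and the consumer-level 17 times figures can be illustrated with a back-of-the-envelope blend. The sketch assumes, purely for illustration, that a share of a brand's impressions are videos viewed longer than 5 s that click at 17 times the static rate, while all other impressions (static ads and videos viewed for less than 5 s) click at the static rate. The implied share is a property of this simplification and not a figure measured in our data.

```python
def blended_ctr_lift(share_long_video, video_to_static_ratio):
    """Brand-level CTR relative to an all-static baseline under the assumption above."""
    return 1.0 + share_long_video * (video_to_static_ratio - 1.0)

def implied_share(observed_lift, video_to_static_ratio):
    """Share of long-viewed video impressions that would reproduce a given lift."""
    return (observed_lift - 1.0) / (video_to_static_ratio - 1.0)

share = implied_share(observed_lift=1.25, video_to_static_ratio=17.0)
print(f"implied share of impressions that are long-viewed video: {share:.2%}")
print(f"check, blended lift: {blended_ctr_lift(share, 17.0):.2f}")
```

Under this simplification, even a small share of long-viewed video impressions is enough to move the blended brand-level CTR by a quarter, which is consistent with the twofold rationale given above.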

Discussion

This study combines the causal analysis of the short- and long-term performance difference between advertisers that do and do not use SBv ads and an exploratory analysis of how video ad viewing by millions of consumers is associated with their CTRs.

Our first contribution is to analytics practice. Regarding RQ1, in our causal analysis with a shorter-term time horizon of one month, we show that brands that launched an SBv campaign for the first time attained an average 21.3% increase in sales the following month, compared with brands that did not. Advertisers can therefore expect results within a month of investing in SBv. In the causal analysis with a longer-term time horizon of one year, we find that brands that used SP + SB + SBv achieved higher average YoY sales growth of 10% than brands that used SP + SB only without SBv.

With regard to RQ2, we show that consumers were 17 times more likely to click on video than static ads after a 5-s exposure, and consumer fatigue was not a factor even at more than 90% video exposure. For the same consumers, the average unmuted video CTR was 3.1 times that of muted. Moreover, CTR increased with the viewed video length for both muted and unmuted consumers. Finally, the muted CTR increased exponentially between 0 and 5 s, whereas the unmuted CTR increased only marginally between 0 and 5 s; the unmuted CTR was higher than the muted CTR at any viewed length. Considering these results, we recommend that, to increase their CTR and sales growth, brands should consider adding SBv to their media plans, allocating a greater share of their ad mix to SBv ad spend, and designing their creatives to encourage consumers to unmute their videos during the initial 5 s and to watch their videos longer than 5 s.

As to analytics research, our findings add to the growing literature on video ad effectiveness. Across many countries and ads, we find support for the superiority of video over static images (Appiah 2006; Chattington et al. 2009; Decker et al. 2015). Our results are consistent with Media Richness theory (Daft and Lengel 1986), as the richer medium of video exerts much higher consumer influence than static images (Grgurovic 2022; Semerádová and Weinlich 2020), especially when the sound is on, thus immersing the consumer in the full richness of the medium. In contrast, we do not observe any evidence of video overloading consumer cognition (Sweller 2005), as we find similar video benefits for consumers who predominantly see and hear video ads. As to viewed video length, we find different benefits for muted versus unmuted videos, a distinction hitherto unobserved in the literature: Shehu et al. (2016) show that high likability at the beginning and the end of a video ad is the most important, but they do not investigate how this may differ for muted versus unmuted videos.

Would our findings generalize beyond Amazon Ads? We believe so. Facebook Databox (Dopson 2021) reports that (instream) video ads drive CTR 2 to 3 times higher than static imagery ads and result in better conversions, with 20% to 30% increases. Furthermore, Giordano et al. (2015) find that 57% of campaigns on Google have an average lift (increase) of 13% among audiences exposed to TrueView video ads compared with those exposed to static ads. Li and Lo (2015) demonstrate that instream video ads raise consumer awareness and consideration, enhancing brand recognition.

For viewed video length, Shehu et al. (2016) show that high likability at the beginning and end of a video ad is the most important. This result is directionally consistent with our finding that the first 5 s of an outstream video ad are crucial for CTR and that the unmuted CTR exhibits an uptick at full video completion. Moreover, recent research has shown specific techniques that increase CTR, such as the two-shot, a filmmaking technique in which two people appear in the frame (Yu et al. 2022). We conjecture that the two-shot technique might also encourage unmuting video ads and that the unmuted video ads in Yu et al.’s (2022) study might have also contributed to higher CTRs for the two-shot videos. Additional research is necessary to test these conjectures.

Finally, we acknowledge that our consumer-level CTR analysis is not causal and therefore does not answer the question of why the studied dimensions of video watching are related to a higher CTR. Were the consumers who watched video ads longer (see Fig. 6) further along the purchase funnel or more interested in buying to begin with? Or did they become more interested in the products after watching video ads longer? Likely all three explanations apply, and future research should tease out their importance. While we controlled for heterogeneity across consumers by keeping the consumer and month constant, we do not have information on different interest levels, purchase funnel stages, or consumer moods in that month. Experimental manipulation of the consumer goals and state would move theory forward on this matter.

Conclusion

Using a multi-method approach, we conclude that brands that adopted SBv on Amazon increased their sales in the short run and both their sales and CTR in the long run, compared with brands that used only sponsored ads without SBv. Digging deeper into the consumer-level data, we also observe that SBv engaged consumers more than static ads (17 times higher probability of clicking video ads instead of static ads after 5 s of viewing) and that unmuted SBv attracted more consumer attention and increased the average CTR 3.1 times. Therefore, we recommend that brands interested in improving their CTRs and sales growth adopt SBv, consider allocating incremental budget to SBv, and employ professional video creation techniques that encourage consumers to unmute their videos during the initial 5 s and to watch them for longer than 5 s.

This research has several limitations that suggest areas for future research. First, CTR depends on many additional factors, and we do not yet know why consumers unmute video ads. According to Semerádová and Weinlich (2020), 85% of Facebook videos are watched without sound. Moreover, we did not analyze the role of frequency of video ad exposure, nor the impact on advertising efficiency, i.e., return on advertising spend (ROAS). Second, video ads likely have benefits beyond CTR as they generate awareness and consideration among potential customers. However, video ads also cost more to produce than static ads, so future research should examine the optimal allocation between static and video ads for brands in different conditions. Third, why are some brands and some creatives more successful in their video performance? Our description of distributional characteristics in Appendix 3 may inspire further inquiry.

All in all, regarding video ads in this new medium of Amazon Ads, SBvs rang up clicks and sales in both the short and long run. We hope our study sparks further development and research in this exciting frontier of advertising effectiveness.

Notes

The ratio of unmuted to muted CTR by viewed video length combines all levels of exposure to unmuted video. Regarding consumer fatigue, we observe that the unmuted-to-muted CTR ratio declines slightly at a high level of unmuted video exposure.

These adaptive weights result from the statistical similarities between treatment and control populations spanned by the 50+ features we used to account for confounding. The top confounders include the vertical (product category) of the advertiser, the number of enabled campaigns, trailing retail performance metrics, country of origin, and inventory position. Our algorithm generates a representation of the input features into a Kernel space and then uses those projections to generate an adaptive matching. Under this setting, each treated unit will have a match generated as a linear combination of non-treated units, with weights that are data-adaptive. Thus, every confounder that goes into the model contributes to the matching.
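The idea of a match built as a data-adaptive linear combination of non-treated units can be illustrated with a minimal sketch. It is not the algorithm used in the study: the Gaussian (RBF) kernel, the `bandwidth` parameter, the two toy features, and all function and variable names are assumptions chosen only for illustration.

```python
from math import exp

def rbf_kernel(a, b, bandwidth=1.0):
    """Gaussian kernel similarity between two feature vectors (assumed kernel)."""
    squared_distance = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return exp(-squared_distance / (2 * bandwidth ** 2))

def synthetic_match(treated, controls, control_outcomes, bandwidth=1.0):
    """Return normalized kernel weights over the control units and the
    weighted control outcome that serves as the matched comparison."""
    raw = [rbf_kernel(treated, c, bandwidth) for c in controls]
    total = sum(raw)
    weights = [w / total for w in raw]  # weights sum to 1
    matched_outcome = sum(w * y for w, y in zip(weights, control_outcomes))
    return weights, matched_outcome

# Hypothetical toy data: two confounders per advertiser, one outcome each.
controls = [[0.0, 0.0], [1.0, 1.0], [5.0, 5.0]]
control_outcomes = [10.0, 12.0, 30.0]
treated_unit = [0.9, 1.1]

weights, counterfactual = synthetic_match(treated_unit, controls, control_outcomes)
print(weights, counterfactual)
```

In this toy setup, the control unit closest to the treated unit in feature space receives the largest weight, and every feature that enters the kernel influences the weights.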

We tested the average and standard deviation of the video-to-static CTR ratio for each video exposure over 12 months. We then tested in month 13 whether the average video-to-static CTR ratio was statistically the same in each exposure group. The test showed that the consumer video-to-static CTR ratio did not change over time at the 95% confidence level.

All p values are below 0.01 for the findings discussed in this paragraph.

For example, a consumer with just four impression exposures can only end up with 0%, 25% (20% bin), 50%, 75% (70% bin), or 100% video exposure. For 90% or less than 10% exposure, a consumer needs at least 10 impressions, and for more than 90% exposure, a consumer needs at least 11 impressions and more likely 50 impressions. Such “low-frequency” consumers cause noise.
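The arithmetic behind these thresholds can be checked with a short sketch. The function names are hypothetical, and "more than 90% exposure" is assumed here to mean strictly between 90% and 100%.

```python
from fractions import Fraction

def achievable_shares(impressions):
    """All video-exposure shares a consumer with this many impressions can reach."""
    return [Fraction(k, impressions) for k in range(impressions + 1)]

def min_impressions(condition, limit=100):
    """Smallest impression count at which some achievable share meets the condition."""
    for n in range(1, limit + 1):
        if any(condition(share) for share in achievable_shares(n)):
            return n
    return None

# Four impressions allow only 0, 1/4, 1/2, 3/4, and 1.
four = achievable_shares(4)

# Exactly 90% first becomes possible at 9 of 10 impressions.
exactly_90 = min_impressions(lambda s: s == Fraction(9, 10))

# Strictly between 90% and 100% first becomes possible at 10 of 11 impressions.
above_90 = min_impressions(lambda s: Fraction(9, 10) < s < 1)

print(four, exactly_90, above_90)
```

Exact fractions are used instead of floats so that boundary cases such as 9/10 are compared without rounding error.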

References

Alaa, A.M., and M. van der Schaar. 2018. Bayesian nonparametric causal inference: Information rates and learning algorithms. IEEE Journal of Selected Topics in Signal Processing 12 (5): 1031–1046.

Amazon. 2021. A guide to creating Sponsored Brands video ads. https://advertising.amazon.com/library/guides/getting-started-with-sponsored-brands-video.

Appiah, O. 2006. Rich Media, poor Media: The Impact of audio/video vs. text/picture testimonial ads on browsers’ evaluations of commercial web sites and online products. Journal of Current Issues and Research in Advertising 28 (1): 73–86.

Austin, P.C. 2011. An introduction to propensity score methods for reducing the effects of confounding in observational studies. Multivariate Behavioral Research 46 (3): 399–424.

Bagadia, D., and E. Quint. 2021. Inside Google Marketing: Everyone has been overlooking the mid-funnel. Even us. Think with Google, https://www.thinkwithgoogle.com/intl/en-154/consumer-insights/consumer-journey/mid-funnel-marketing-brand-performance.

Batra, R., and K.L. Keller. 2016. Integrating marketing communications: New findings, new lessons, and new ideas. Journal of Marketing 80 (6): 122–145.

Becker, M., T. Scholdra, M. Berkmann, and W. Reinartz. 2022. The effect of content on zapping in TV advertising. Journal of Marketing. https://doi.org/10.1177/00222429221105818.

Belanche, D., C. Flavián, and A. Pérez-Rueda. 2017. Understanding interactive online advertising: Congruence and product involvement in highly and lowly arousing, skippable video ads. Journal of Interactive Marketing 37: 75–88.

Bétrancourt, M., and B. Tversky. 2000. Effect of computer animation on users’ performance: A review/(Effet de l’animation sur les performances des utilisateurs: Une synthèse). Le Travail Humain 63 (4): 311.

Bonilla, E.V., K.M.A. Chai, and C.K.I. Williams. 2008. Multi-task Gaussian process prediction. In Advances in neural information processing systems, ed. N. Zhang and J. Tian, 153–160. Arlington, VA: AUAI Press.

Brentlinger, N. 2020. What is full-funnel marketing? Creating a funnel strategy. Amazon Ads, November 18, https://advertising.amazon.com/blog/what-is-full-funnel-marketing.

Campbell, C., F. Mattison Thompson, P.E. Grimm, and K. Robson. 2017. Understanding why consumers don’t skip pre-roll video ads. Journal of Advertising 46 (3): 411–423.

Chattington, M., N. Reed, D. Basacik, A. Flint, and A. Parkes. 2009. Investigating driver distraction: The effects of video and static advertising. Report No. PPR409. London: Transport Research Laboratory.

Chernozhukov, V., D. Chetverikov, M. Demirer, E. Duflo, C. Hansen, W. Newey, and J. Robins. 2018. Double/debiased machine learning for treatment and structural parameters. Econometrics Journal 21 (1): C1–C68.

Daft, R.L., and R.H. Lengel. 1986. Organizational information requirements, media richness and structural design. Management Science 32 (5): 554–571.

Dardis, F.E., M. Schmierbach, B. Sherrick, F. Waddell, J. Aviles, S. Kumble, and E. Bailey. 2016. Adver-Where? Comparing the effectiveness of banner ads and video ads in online video games. Journal of Interactive Advertising 16 (2): 87–100.

Datta, H., G. Knox, and B.J. Bronnenberg. 2018. Changing their tune: How consumers’ adoption of online streaming affects music consumption and discovery. Marketing Science 37 (1): 5–21.

Digital Marketing Trends. 2017. Digital Griffon experts on Hubspot and others. https://digitalgriffon.com/digital-marketing-trends-2017.

Decker, J.S., S.J. Stannard, B. McManus, S.M. Wittig, V.P. Sisiopiku, and D. Stavrinos. 2015. The impact of billboards on driver visual behavior: A systematic literature review. Traffic Injury Prevention 16: 234–239.

Dopson, E. 2021. Video vs. images: Which drives more engagement in Facebook ads? Databox, June 28, https://databox.com/videos-vs-images-in-facebook-ads.

Giordano, M., C. O’Neil-Hart, and H. Blumenstein. 2015. YouTube TrueView. December, https://www.thinkwithgoogle.com/marketing-resources/online-video-ads-drive-consideration-favorability-purchase-intent-sales/.

Gravetter, F.J., and L.-A.B. Forzano. 2015. Research Methods for the Behavioral Sciences. Boston: Cengage Learning.

Grgurovic, M. 2022. Video vs image ads. Brid TV, August 3, https://www.brid.tv/video-vs-image-ads/.

Hegarty, M., S. Kriz, and C. Cate. 2003. The roles of mental animations and external animations in understanding mechanical systems. Cognition and Instruction 21 (4): 209–249.

Iacobucci, D., M. Petrescu, A. Krishen, and M. Bendixen. 2019. The state of marketing analytics in research and practice. Journal of Marketing Analytics 7: 152–181.

Kumar, A.B., R. Rishika, R. Janakiraman, and P.K. Kannan. 2016. From social to sale: The effects of firm-generated content in social media on customer behavior. Journal of Marketing 80 (1): 7–25.

Li, H., and H.-Y. Lo. 2015. Do you recognize its brand? The effectiveness of online instream video advertisements. Journal of Advertising 44 (3): 208–218.

Mayer, R.E., M. Hegarty, S. Mayer, and J. Campbell. 2005. When static media promote active learning: Annotated illustrations versus narrated animations in multimedia instruction. Journal of Experimental Psychology: Applied 11 (4): 256.

McBee, D. 2015. Why is video more expensive than display? Simpli.fi. https://simpli.fi/video-expensive-display/.

McGranaghan, M., J. Liaukonyte, and K.C. Wilbur. 2022. How viewer tuning, presence, and attention respond to ad content and predict brand search lift. Marketing Science 41 (5): 873–895.

Pauwels, K., S. Erguncu, and G. Yildirim. 2013. Winning hearts, minds and sales: How marketing communication enters the purchase process in emerging and mature markets. International Journal of Research in Marketing 30 (1):57–68.

Pauwels, K., M. Caddeo, and G. Schnaidt. 2022. Causal impact of digital display ads on advertiser performance. In Proceedings of the European Marketing Academy, 51st (108183): EMAC. http://proceedings.emac-online.org/pdfs/A2022-108183.pdf.

Pauwels, K., and Z. Shah. 2022. Does full-funnel online ads help grow customer base and brand sales? Causal inference at Amazon. Paper presented at Marketing Analytics Symposium (MASS); 24 May, Sydney, Australia. https://conference.unsw.edu.au/en/marketing-analytics-symposium-sydney-2022#symposium.

Qin, V., and K. Pauwels. 2023. Upper funnel ad effectiveness and seasonality in consumer durable goods. Applied Marketing Analytics: The Peer-Reviewed Journal 8 (4): 345–352.

Roberts, J.H., and J.M. Lattin. 1997. Consideration: Review of research and prospects for future insights. Journal of Marketing Research 34 (3): 406–410.

Rogers, K., and M. Weber. 2019. Audio habits and motivations in video game players. In AM'19: Proceedings of the 14th international audio mostly conference: A journey in sound. New York: Association for Computing Machinery, 45–52.

Shah, Z. 2021. Sponsored Brands video helps increase sales and click-through rates. Amazon Advertising. https://advertising.amazon.com/en-us/library/research/combining-sponsored-brands-video-with-other-campaigns/.

Schnotz, W., J. Böckheler, and H. Grzondziel. 1999. Individual and co-operative learning with interactive animated pictures. European Journal of Psychology of Education 14: 245–265.

Schnotz, W., and M. Bannert. 2003. Construction and interference in learning from multiple representation. Learning and Instruction 13 (2): 141–156.

Semerádová, T., and P. Weinlich. 2020. The (in)effectiveness of in-stream video ads: Comparison of Facebook and YouTube. In Impacts of Online Advertising on Business Performance, ed. T. Semerádová and P. Weinlich, 200–225. Hershey, PA: IGI Global.

Shehu, E., T.H.A. Bijmolt, and M. Clement. 2016. Effects of likeability dynamics on consumers’ intention to share online video advertisements. Journal of Interactive Marketing 35: 27–43.

Shorter, J.D., and R.L. Dean. 1994. Computing in collegiate schools of business: Are mainframes and stand-alone microcomputers still good enough? Journal of Systems Management 45 (7): 36.

Srinivasan, S., M. Vanhuele, and K. Pauwels. 2010. Mind-set metrics in market response models: An integrative approach. Journal of Marketing Research 47 (4): 672–684.

Study.com. 2013. How novelty effects, test sensitization and measurement timing can threaten external validity. November 26. https://study.com/academy/lesson/threats-to-external-validity-ii-novelty-effects-test-sensitization-measurement-timing.html.

Sweller, J. 2005. Implications of cognitive load theory for multimedia learning. The Cambridge Handbook of Multimedia Learning 3 (2): 19–30.

Teads. 2015. Outstream digital video ad formats outperform traditional instream formats according to a Millward Brown digital study commissioned by Teads. August 13, https://www.teads.com/uk-outstream-digital-video-ad-formats-outperform-traditional-instream-formats-according-to-a-millward-brown-digital-study-commissioned-by-teads/.

Tversky, B., J.B. Morrison, and M. Betrancourt. 2002. Animation: Can it facilitate? International Journal of Human-Computer Studies 57 (4): 247–262.

Van der Schaar, M., and A.M. Alaa. 2017. Bayesian inference of individualized treatment effects using multi-task Gaussian processes. US: Neural Information Processing Systems (NeurIPS).

Wager, S., and S. Athey. 2018. Estimation and inference of heterogeneous treatment effects using random forests. Journal of the American Statistical Association 113 (523): 1228–1242.

Yu, Y., Y. Wang, G. Zhang, Z. Zhang, C. Wang, and Y. Tan. 2022. Outstream video advertisement effectiveness. Working paper, University of Washington. https://doi.org/10.2139/ssrn.4098246

Ethics declarations

Competing Interests

The authors declare that they have no conflict of interests.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendices

Appendix 1: Consumer sample sizes

Our sample for the consumer-level analysis is global, with more than 300 M consumers. Monthly sample size from May 1, 2021, to May 31, 2022, ranges from 158 to 328 M consumers. The out-of-sample validation monthly sample size from October 1, 2021, to October 31, 2022, ranges from 294 to 345 M consumers. The minimum sample size in Fig. 5 for a decile in a month was 6268 unique consumers, and the average sample size per decile in the same month was greater than 24 M consumers.

Appendix 2: Assessing the match between treated and control groups post–propensity score stratification

To assess the quality of the match between the treated and control groups, the graphs in Fig. 7 show the samples before and after the matching exercise. For each confounding variable, we show propensity scores before and after matching; the greater overlap of the treated and untreated sample sets after matching indicates a good match.

In addition, we conducted Mann–Whitney U tests to verify that the medians of the treated and untreated groups were close, and t tests for each confounding variable to verify that the means of the treated and untreated sample sets were close. For all confounding variables, we achieved a strong match: both the medians and the means were closely matched between the treated and untreated groups.
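A balance check of this kind might look like the following minimal sketch for a single confounder. It is an illustration only, not the code used in the study: the data are hypothetical, the Mann–Whitney p value uses a normal approximation without tie correction, and the t statistic is the Welch (unequal-variance) form, which is an assumption because the text does not state which t test variant was used.

```python
from math import sqrt
from statistics import NormalDist, mean, variance

def mann_whitney_u(x, y):
    """U statistic of x versus y with a two-sided normal-approximation p value."""
    u = sum((a > b) + 0.5 * (a == b) for a in x for b in y)
    n1, n2 = len(x), len(y)
    mu = n1 * n2 / 2
    sigma = sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u - mu) / sigma
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return u, p_value

def welch_t(x, y):
    """Welch t statistic for the difference in means of x and y."""
    return (mean(x) - mean(y)) / sqrt(variance(x) / len(x) + variance(y) / len(y))

# Hypothetical values of one confounder (e.g., number of enabled campaigns).
treated_matched = [1.0, 2.0, 3.0, 4.0]
control_matched = [1.0, 2.0, 3.0, 4.0]
control_unmatched = [11.0, 12.0, 13.0, 14.0]

u_balanced, p_balanced = mann_whitney_u(treated_matched, control_matched)
t_balanced = welch_t(treated_matched, control_matched)
u_unbalanced, p_unbalanced = mann_whitney_u(treated_matched, control_unmatched)
print(u_balanced, p_balanced, t_balanced, u_unbalanced, p_unbalanced)
```

A U statistic near half the number of pairs and a t statistic near zero, as in the matched toy groups, are what a well-balanced confounder would show.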

Appendix 3: Key distribution characteristics

First, our global sample contains 221,920 advertisers. All consumers are dispersed across advertisers with a low concentration index. The Herfindahl–Hirschman index of consumers by advertiser is 0.02%, whereas the maximum share of consumers by an advertiser is 0.5%, indicating that there are no dominant SB advertisers in our sample. Second, of the 221,920 advertisers using SB (from May 1, 2021, to April 30, 2022), 99,499 (45%) used static SB only, 81,033 (36%) used both SBv and static SB, and 41,388 (19%) used SBv only. Third, the simple average SBv CTR among the 81,033 advertisers using both SBv and static SB was 2.9 times the simple average static SB CTR with a paired t test p value less than 1e−6. Note that this simple average was across advertisers, not across consumers. This CTR included 0-s videos. This CTR result was only directional and could not be directly compared with either Fig. 5 or Fig. 4b. Fourth, 78,319 advertisers among the 81,033 advertisers using both SBv and static SB had more than 500 SBv impressions and at least one static SB click. Among these 78,319 advertisers, 94% (73,943 advertisers) had an SBv CTR greater than a static SB CTR, and the remaining 6% (4376 advertisers) had an SBv CTR less than a static SB CTR.
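The counts reported above can be cross-checked with a few lines of arithmetic. The sketch also includes a generic Herfindahl–Hirschman helper; the four equal shares passed to it are hypothetical, since the advertiser-level consumer shares behind the reported 0.02% are not given in the text.

```python
# Advertiser counts as reported in Appendix 3.
static_only, both_formats, video_only = 99_499, 81_033, 41_388
total = static_only + both_formats + video_only

shares = {
    "static SB only": static_only / total,
    "SBv and static SB": both_formats / total,
    "SBv only": video_only / total,
}

# Advertisers with more than 500 SBv impressions and at least one static SB click.
eligible = 78_319
video_higher, static_higher = 73_943, 4_376
video_higher_share = video_higher / eligible

def herfindahl(market_shares):
    """Herfindahl-Hirschman index: sum of squared shares (shares as fractions)."""
    return sum(s * s for s in market_shares)

# Hypothetical example: four advertisers with equal consumer shares.
toy_hhi = herfindahl([0.25, 0.25, 0.25, 0.25])

for name, share in shares.items():
    print(f"{name}: {share:.1%}")
print(total, f"{video_higher_share:.1%}", toy_hhi)
```

The three group counts add up to the stated total of 221,920 advertisers, and the two subgroups add up to the 78,319 eligible advertisers; the percentages in the text are rounded versions of the printed shares.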

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Pauwels, K., Peran, M., Shah, Z. et al. Sponsored brands video rings up clicks and sales in the short and long run. J Market Anal 11, 275–286 (2023). https://doi.org/10.1057/s41270-023-00237-3

DOI: https://doi.org/10.1057/s41270-023-00237-3