Abstract

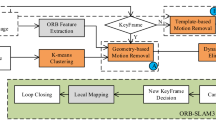

Most current research on dynamic visual Simultaneous Localization and Mapping (SLAM) systems focuses on scenes in which static objects occupy most of the environment. In densely populated indoor environments, however, crowd movement can cause feature information to be lost, which reduces the system's robustness and accuracy. This paper proposes a visual SLAM algorithm for dense crowd environments that builds on the ORB-SLAM2 framework and uses an RGB-D camera. First, we introduce a dedicated object detection thread and improve the detection network to extend its coverage in crowded environments, which increases its average accuracy by 41.5%. Second, we observe that feature points inside a detection box that do not belong to a person are often mistakenly deleted. We therefore propose an algorithm based on standard deviation fitting that filters out the human features in the box while preserving the others. Finally, we evaluate the system on the TUM and Bonn RGB-D dynamic datasets and compare it with ORB-SLAM2 and other state-of-the-art visual dynamic SLAM methods. Compared with ORB-SLAM2, our system reduces the pose estimation error by at least 93.60% in highly dynamic environments and by at least 97.11% on the Bonn RGB-D Dynamic Dataset. Our method also performs comparably to other recent visual dynamic SLAM methods.
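
The abstract does not spell out how the standard deviation fitting works. The Python sketch below shows one plausible reading, offered purely as an assumption and not as the authors' published method. For keypoints inside a person detection box, it takes the median depth as the person's depth, measures the spread with the population standard deviation, and keeps in-box points whose depth lies more than `k` standard deviations away as static background. The function name `filter_box_keypoints`, the threshold `k`, and the median/standard-deviation rule are all hypothetical.

```python
from statistics import median, pstdev


def filter_box_keypoints(points, depths, box, k=1.0):
    """Return a keep-mask for keypoints around one person detection box.

    Hypothetical rule (not the paper's published algorithm):
    - points outside the box are always kept;
    - in-box points with invalid depth (<= 0) are dropped;
    - among in-box points with valid depth, the median depth is taken as the
      person's depth, and points deviating from it by more than k population
      standard deviations are kept as static background.
    """
    x_min, y_min, x_max, y_max = box
    in_box = [x_min <= u <= x_max and y_min <= v <= y_max for u, v in points]
    candidate = [inside and d > 0 for inside, d in zip(in_box, depths)]
    keep = [not inside for inside in in_box]

    box_depths = [d for ok, d in zip(candidate, depths) if ok]
    if len(box_depths) < 2:
        # Too few depth samples to estimate a spread: drop all in-box points.
        return keep

    center = median(box_depths)  # assumed depth of the person
    sigma = pstdev(box_depths)   # spread of in-box depths
    for i, (ok, d) in enumerate(zip(candidate, depths)):
        if ok and abs(d - center) > k * sigma:
            keep[i] = True  # far from the person's depth: treat as static
    return keep


if __name__ == "__main__":
    pts = [(0, 0), (12, 12), (14, 15), (16, 11), (18, 18), (15, 19), (13, 13)]
    dep = [1.0, 2.0, 2.1, 1.9, 2.0, 5.0, 0.0]
    print(filter_box_keypoints(pts, dep, (10, 10, 20, 20)))
    # -> [True, False, False, False, False, True, False]
```

The median is used instead of the mean so that a few background points do not pull the estimated person depth toward them. This assumes the person covers most of the box, which may not hold for partially occluded people in a crowd.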

Code or data availability

The CrowdHuman dataset [13], the MOT dataset [14], the TUM RGB-D dataset [16], and the Bonn RGB-D Dynamic Dataset [17] were used in this work.

References

[1] Mur-Artal, R., Tardós, J.D.: ORB-SLAM2: an open-source SLAM system for monocular, stereo, and RGB-D cameras. IEEE Trans. Rob. 33(5), 1255–1262 (2017). https://doi.org/10.1109/TRO.2017.2705103

[2] Campos, C., Elvira, R., Rodríguez, J.J.G., Montiel, J.M., Tardós, J.D.: ORB-SLAM3: an accurate open-source library for visual, visual-inertial, and multimap SLAM. IEEE Trans. Rob. 37(6), 1874–1890 (2021)

[3] Bescos, B., Fácil, J.M., Civera, J., Neira, J.: DynaSLAM: tracking, mapping, and inpainting in dynamic scenes. IEEE Robot. Autom. Lett. 3(4), 4076–4083 (2018). https://doi.org/10.1109/LRA.2018.2860039

[4] Li, S., Lee, D.: RGB-D SLAM in dynamic environments using static point weighting. IEEE Robot. Autom. Lett. 2(4), 2263–2270 (2017)

[5] Zhang, T., Zhang, H., Li, Y., Nakamura, Y., Zhang, L.: FlowFusion: dynamic dense RGB-D SLAM based on optical flow. In: 2020 IEEE International Conference on Robotics and Automation (ICRA), pp. 7322–7328. IEEE (2020). https://doi.org/10.1109/ICRA40945.2020.9197349

[6] Cui, L., Ma, C.: SOF-SLAM: a semantic visual SLAM for dynamic environments. IEEE Access 7, 166528–166539 (2019). https://doi.org/10.1109/ACCESS.2019.2952161

[7] Yu, C., Liu, Z., Liu, X.-J., Xie, F., Yang, Y., Wei, Q., Fei, Q.: DS-SLAM: a semantic visual SLAM towards dynamic environments. In: 2018 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 1168–1174. IEEE (2018). https://doi.org/10.1109/IROS.2018.8593691

[8] Badrinarayanan, V., Kendall, A., Cipolla, R.: SegNet: a deep convolutional encoder-decoder architecture for image segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 39(12), 2481–2495 (2017). https://doi.org/10.1109/TPAMI.2016.2644615

[9] He, K., Gkioxari, G., Dollár, P., Girshick, R.: Mask R-CNN. In: Proceedings of the IEEE International Conference on Computer Vision (ICCV), pp. 2961–2969 (2017)

[10] Zhong, Y., Hu, S., Huang, G., Bai, L., Li, Q.: WF-SLAM: a robust VSLAM for dynamic scenarios via weighted features. IEEE Sens. J. (2022). https://doi.org/10.1109/JSEN.2022.3169340

[11] Soares, J.C.V., Gattass, M., Meggiolaro, M.A.: Crowd-SLAM: visual SLAM towards crowded environments using object detection. J. Intell. Robot. Syst. 102(2), 1–16 (2021)

[12] Bochkovskiy, A., Wang, C.-Y., Liao, H.-Y.M.: YOLOv4: optimal speed and accuracy of object detection. arXiv:2004.10934 (2020)

[13] Shao, S., Zhao, Z., Li, B., Xiao, T., Yu, G., Zhang, X., Sun, J.: CrowdHuman: a benchmark for detecting human in a crowd. arXiv:1805.00123 (2018). https://doi.org/10.48550/arXiv.1805.00123

[14] Dendorfer, P., Rezatofighi, H., Milan, A., Shi, J., Cremers, D., Reid, I., Roth, S., Schindler, K., Leal-Taixé, L.: MOT20: a benchmark for multi object tracking in crowded scenes. arXiv:2003.09003 (2020). https://doi.org/10.48550/arXiv.2003.09003

[15] Torr, P.H., Zisserman, A.: MLESAC: a new robust estimator with application to estimating image geometry. Comput. Vis. Image Underst. 78(1), 138–156 (2000)

[16] Sturm, J., Engelhard, N., Endres, F., Burgard, W., Cremers, D.: A benchmark for the evaluation of RGB-D SLAM systems. In: 2012 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 573–580. IEEE (2012). https://doi.org/10.1109/IROS.2012.6385773

[17] Palazzolo, E., Behley, J., Lottes, P., Giguere, P., Stachniss, C.: ReFusion: 3D reconstruction in dynamic environments for RGB-D cameras exploiting residuals. In: 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 7855–7862. IEEE (2019). https://doi.org/10.1109/IROS40897.2019.8967590

[18] Liu, Y., Miura, J.: RDS-SLAM: real-time dynamic SLAM using semantic segmentation methods. IEEE Access 9, 23772–23785 (2021). https://doi.org/10.1109/ACCESS.2021.3050617

[19] Sun, L., Wei, J., Su, S., Wu, P.: SOLO-SLAM: a parallel semantic SLAM algorithm for dynamic scenes. Sensors 22(18), 6977 (2022). https://doi.org/10.3390/s22186977

Acknowledgements

This work was supported by the National Natural Science Foundation of China (Grant Nos. 61703040 and 61603047) and the Teacher Recruitment and Support Plan of Beijing Information Science & Technology University (Grant No. 5029011103). The authors thank Juan Dai for her technical assistance with the experiments and Zhong Su and Cui Zhu for their insightful suggestions throughout the study. The authors also express their gratitude to the anonymous reviewers for their valuable comments and suggestions that helped improve the quality of this paper.

Author information

Contributions

Jianfeng Li, the first author, was responsible for the entire creative process. Juan Dai, the corresponding author, provided guidance and revised the manuscript. Zhong Su and Cui Zhu assisted with revising the manuscript.

Ethics declarations

Conflicts of interest/Competing interests

Not applicable

Ethics approval

Not applicable

Consent to participate

Not applicable

Consent for publication

Not applicable

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Li, J., Dai, J., Su, Z. et al. RGB-D Based Visual SLAM Algorithm for Indoor Crowd Environment. J Intell Robot Syst 110, 27 (2024). https://doi.org/10.1007/s10846-023-02046-3

DOI: https://doi.org/10.1007/s10846-023-02046-3