Abstract

We study the training of deep neural networks by gradient descent where floating-point arithmetic is used to compute the gradients. In this framework and under realistic assumptions, we demonstrate that it is highly unlikely to find ReLU neural networks that maintain, in the course of training with gradient descent, superlinearly many affine pieces with respect to their number of layers. In virtually all approximation-theoretical arguments which yield high-order polynomial rates of approximation, sequences of ReLU neural networks with exponentially many affine pieces compared to their numbers of layers are used. As a consequence, we conclude that approximating sequences of ReLU neural networks resulting from gradient descent in practice differ substantially from theoretically constructed sequences. The assumptions and the theoretical results are compared to a numerical study, which yields concurring results.

References

Arridge, S., Maass, P., Öktem, O., Schönlieb, C.-B.: Solving inverse problems using data-driven models. Acta Numer. 28, 1–174 (2019)

Bachmayr, M., Kazeev, V.: Stability and preconditioning of elliptic PDEs with low-rank multilevel structure. Found. Comput. Math. 20, 1175–1236 (2020)

Bhattacharya, K., Hosseini, B., Kovachki, N.B., Stuart, A.M.: Model reduction and neural networks for parametric PDEs. arXiv:2005.03180 (2020)

Boche, H., Fono, A., Kutyniok, G.: Limitations of deep learning for inverse problems on digital hardware. arXiv:2202.13490 (2022)

Bölcskei, H., Grohs, P., Kutyniok, G., Petersen, P.C.: Optimal approximation with sparsely connected deep neural networks. SIAM J. Math. Data Sci. 1, 8–45 (2019)

Colbrook, M.J., Antun, V., Hansen, A.C.: The difficulty of computing stable and accurate neural networks: on the barriers of deep learning and Smale’s 18th problem. Proc. Natl. Acad. Sci. 119(12), e2107151119 (2022)

E, W., Yu, B.: The deep Ritz method: a deep learning-based numerical algorithm for solving variational problems. Commun. Math. Stat. 6(1), 1–12 (2018)

Goodfellow, I., Bengio, Y., Courville, A.: Deep Learning. MIT Press (2016)

Grohs, P., Hornung, F., Jentzen, A., Von Wurstemberger, P.: A proof that artificial neural networks overcome the curse of dimensionality in the numerical approximation of Black-Scholes partial differential equations. Memoirs of the American Mathematical Society (2020)

Gupta, S., Agrawal, A., Gopalakrishnan, K., Narayanan, P.: Deep learning with limited numerical precision. In: International Conference on Machine Learning, pp. 1737–1746. PMLR (2015)

Gühring, I., Kutyniok, G., Petersen, P.C.: Error bounds for approximations with deep ReLU neural networks in \(W^{s,p}\) norms. arXiv:1902.07896 (2019)

Han, J., Jentzen, A., E, W.: Solving high-dimensional partial differential equations using deep learning. Proc. Natl. Acad. Sci. 115(34), 8505–8510 (2018)

Han, S., Mao, H., Dally, W.J.: Deep compression: Compressing deep neural networks with pruning, trained quantization and huffman coding. arXiv:1510.00149 (2015)

Hanin, B., Rolnick, D.: Complexity of linear regions in deep networks. In: International Conference on Machine Learning, pp. 2596–2604. PMLR (2019)

He, J., Li, L., Xu, J., Zheng, C.: ReLU deep neural networks and linear finite elements. arXiv:1807.03973 (2018)

He, K., Zhang, X., Ren, S., Sun, J.: Delving deep into rectifiers: surpassing human-level performance on imagenet classification. In: Proceedings of the IEEE International Conference on Computer Vision, pp. 1026–1034 (2015)

Higham, N.J.: Accuracy and stability of numerical algorithms, 2nd edn. Society for Industrial and Applied Mathematics (2002)

Hubara, I., Courbariaux, M., Soudry, D., El-Yaniv, R., Bengio, Y.: Quantized neural networks: training neural networks with low precision weights and activations. J. Mach. Learn. Res. 18(1), 6869–6898 (2017)

Jacob, B., Kligys, S., Chen, B., Zhu, M., Tang, M., Howard, A., Adam, H., Kalenichenko, D.: Quantization and training of neural networks for efficient integer-arithmetic-only inference. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 2704–2713 (2018)

Jin, K.H., McCann, M.T., Froustey, E., Unser, M.: Deep convolutional neural network for inverse problems in imaging. IEEE Trans. Image. Process. 26(9), 4509–4522 (2017)

Kazeev, V., Oseledets, I., Rakhuba, M., Schwab, C.: QTT-finite-element approximation for multiscale problems I: model problems in one dimension. Adv. Comput. Math. 43(2), 411–442 (2017)

Kazeev, V., Oseledets, I., Rakhuba, M.V., Schwab, C.: Quantized tensor FEM for multiscale problems: diffusion problems in two and three dimensions. Multiscale Model. Simul. 20(3), 893–935 (2022)

Kazeev, V., Schwab, C.: Quantized tensor-structured finite elements for second-order elliptic PDEs in two dimensions. Numer. Math. 138, 133–190 (2018)

Krizhevsky, A., Sutskever, I., Hinton, G.: Imagenet classification with deep convolutional neural networks. In: Adv. Neural Inf. Process. Syst. 25, pp. 1097–1105. Curran Associates, Inc. (2012)

Kutyniok, G., Petersen, P., Raslan, M., Schneider, R.: A theoretical analysis of deep neural networks and parametric pdes. Constr. Approx. 55(1), 73–125 (2022)

LeCun, Y., Bengio, Y., Hinton, G.: Deep learning. Nature 521(7553), 436–444 (2015)

Li, H., Schwab, J., Antholzer, S., Haltmeier, M.: Nett: solving inverse problems with deep neural networks. Inverse Probl. 36(6), 065005 (2020)

Li, Z., Ma, Y., Vajiac, C., Zhang, Y.: Exploration of numerical precision in deep neural networks. arXiv:1805.01078 (2018)

Lu, L., Jin, P., Pang, G., Zhang, Z., Karniadakis, G.E.: Learning nonlinear operators via deeponet based on the universal approximation theorem of operators. Nat. Mach. Intell. 3(3), 218–229 (2021)

Marcati, C., Opschoor, J.A., Petersen, P.C., Schwab, C.: Exponential ReLU neural network approximation rates for point and edge singularities. arXiv:2010.12217 (2020)

Marcati, C., Rakhuba, M., Schwab, C.: Tensor rank bounds for point singularities in \(\mathbb{R} ^3\). Adv. Comput. Math. 48(3), 17–57 (2022)

Nemirovski, A., Juditsky, A., Lan, G., Shapiro, A.: Robust stochastic approximation approach to stochastic programming. SIAM J. Optim. 19(4), 1574–1609 (2009)

Nemirovsky, A.S., Yudin, D.B.: Problem complexity and method efficiency in optimization. Wiley-Interscience Series in Discrete Mathematics. Wiley (1983)

Ongie, G., Jalal, A., Metzler, C.A., Baraniuk, R.G., Dimakis, A.G., Willett, R.: Deep learning techniques for inverse problems in imaging. IEEE J. Sel. Areas Inf. Theory 1(1), 39–56 (2020)

Opschoor, J., Petersen, P., Schwab, C.: Deep ReLU networks and high-order finite element methods. Anal. Appl. 18, 12 (2019)

Petersen, P., Raslan, M., Voigtlaender, F.: Topological properties of the set of functions generated by neural networks of fixed size. Found. Comput. Math. 21(2), 375–444 (2021)

Petersen, P., Voigtlaender, F.: Optimal approximation of piecewise smooth functions using deep ReLU neural networks. Neural Netw. 108, 296–330 (2018)

Pineda, A.F.L., Petersen, P.C.: Deep neural networks can stably solve high-dimensional, noisy, non-linear inverse problems. arXiv:2206.00934 (2022)

Raissi, M., Perdikaris, P., Karniadakis, G.E.: Physics-informed neural networks: a deep learning framework for solving forward and inverse problems involving nonlinear partial differential equations. J. Comput. Phys. 378, 686–707 (2019)

Schwab, C., Zech, J.: Deep learning in high dimension: neural network expression rates for generalized polynomial chaos expansions in UQ. Anal. Appl. 17(01), 19–55 (2019)

Senior, A.W., Evans, R., Jumper, J., Kirkpatrick, J., Sifre, L., Green, T., Qin, C., Žídek, A., Nelson, A.W., Bridgland, A.: Improved protein structure prediction using potentials from deep learning. Nature 577(7792), 706–710 (2020)

Shaham, U., Cloninger, A., Coifman, R.R.: Provable approximation properties for deep neural networks. Appl. Comput. Harmon. Anal. 44(3), 537–557 (2018)

Shalev-Shwartz, S., Ben-David, S.: Understanding Machine Learning: From Theory to Algorithms. Cambridge University Press (2014)

Silver, D., Huang, A., Maddison, C.J., Guez, A., Sifre, L., Van Den Driessche, G., Schrittwieser, J., Antonoglou, I., Panneershelvam, V., Lanctot, M.: Mastering the game of go with deep neural networks and tree search. Nature 529(7587), 484–489 (2016)

Sun, Y., Lao, D., Sundaramoorthi, G., Yezzi, A.: Surprising instabilities in training deep networks and a theoretical analysis. arXiv:2206.02001 (2022)

Telgarsky, M.: Representation benefits of deep feedforward networks. arXiv:1509.08101 (2015)

Yarotsky, D.: Error bounds for approximations with deep ReLU networks. Neural Netw. 94, 103–114 (2017)

Yarotsky, D.: Optimal approximation of continuous functions by very deep ReLU networks. In: Conference on Learning Theory, pp. 639–649. PMLR (2018)

Zhou, S.-C., Wang, Y.-Z., Wen, H., He, Q.-Y., Zou, Y.-H.: Balanced quantization: an effective and efficient approach to quantized neural networks. J. Comput. Sci. Technol. 32(4), 667–682 (2017)

Funding

Open access funding provided by University of Vienna. VK was partially supported by the Austrian Science Fund (FWF) under project F65 “Taming Complexity in Partial Differential Systems”.

Ethics declarations

Conflict of interest

The authors declare no competing interests.

Additional information

Communicated by: Siddhartha Mishra

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

A.1 Example of unstable neural network

Here, we introduce the notion of a finite precision realisation of a given neural network \(\Phi \) and give an example of how numerical instability can affect such NNs. We assume the floating-point addition and multiplication to be defined according to (1.2), where the minima are well defined since \(\mathbb {M}\) has no accumulation points. Further, we consider the floating-point realisation of matrix-vector multiplication that is given by

for any matrix \(A \in \mathbb {R}^{m\times n}\) and any vector \(b \in \mathbb {R}^n\) with \(m,n\in \mathbb {N}\), where \(j = 1, \dots , m\) and the summation is performed from left to right. Next, we define the finite precision realisation of a neural network.

Definition A.1

Let \(\mathbb {M} \subset \mathbb {R}\) be a set without accumulation points. For a neural network \(\Phi \) with input dimension \(d\in \mathbb {N}\), \(L \in \mathbb {N}\) layers, output dimension \(N_L \in \mathbb {N}\) and an activation function \(\varrho _{\mathbb {M}}:\mathbb {M} \rightarrow \mathbb {M}\), we define the finite precision realisation by

where \(x^{(L)}\) is defined as

Remark A.2

Observe that in this section we only require the results of the operations of the NNs to be elements of \(\mathbb {M}\), but not the weights. This greatly simplifies the mathematical argument while still allowing NNs to exhibit instabilities.
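As a rough illustration of the left-to-right summation in the matrix-vector product above, the following Python sketch uses IEEE 754 binary64 floats in place of \(\mathbb {M}\). This is an assumption made for illustration only: the helper name `fl_matvec` and the example matrix are hypothetical and not taken from the text, and the sketch presumes that (1.2) corresponds to rounding the result of each individual operation to the nearest representable number.

```python
def fl_matvec(A, b):
    """Left-to-right floating-point matrix-vector product.

    Every multiplication and every addition is rounded to binary64,
    which stands in for the set M here (an assumption of this sketch).
    """
    result = []
    for row in A:
        acc = row[0] * b[0]
        for a_ji, b_i in zip(row[1:], b[1:]):
            acc = acc + a_ji * b_i  # one rounded product, one rounded sum
        result.append(acc)
    return result


big = 2.0 ** 53
A = [
    [big, 1.0, -big],   # the summand 1 is absorbed by 2**53
    [big, -big, 1.0],   # same entries, different order: exact
]
b = [1.0, 1.0, 1.0]
print(fl_matvec(A, b))
```

In exact arithmetic both rows evaluate to 1. In the first row the intermediate sum \(2^{53} + 1\) is rounded back to \(2^{53}\), so the summand 1 is lost, whereas the second row cancels the large entries first and is computed exactly.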

Having defined finite precision operations we continue by presenting a neural network \(\Phi \) such that for every non-negative input x it holds that

This implies that the relative error of passing to the finite precision network is 1.

To illustrate numerical instability, we introduce the following neural network \(\Phi \): Let \(a \ge 1\), \(N, L \in \mathbb {N}\) and

and

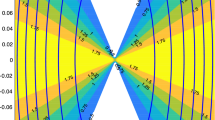

Furthermore, all biases satisfy \(b_{\ell } = 0\) for \(\ell =1, \dots , L\). We denote the neural network defined above by \(\Phi _{a, N, L}\); an illustration is provided in Fig. 7.

Proposition A.3

Let \(a = 2^r \ge 1\) for \(r \in \mathbb {Z}\) and let \(\mathbb {M}\) be as in (1.1), with \(e_{\min } \le 0\), \(e_{\max } > 53+r\), \(p= 53\) and hence \(\epsilon = 2^{-53}\). Furthermore, let \(\varrho (x) = \max \{0,x\}\) and \(\varrho _{\mathbb {M}}(z):= \varrho (z)\) for all \(z \in \mathbb {M}\). Additionally, let \(L\in \mathbb {N}\) and \(N \in \mathbb {N}\) be such that \((L-3)\log _{2}(N-1) + (L-1)\log _{2}(a-2\epsilon ) \ge 54\). Then the neural network \(\Phi _{a, N,L}\) as defined above satisfies

for all \(x \in \mathbb {M}\) such that \(x \ge 0\).

Proof

It follows from the definition of the NN \(\Phi _{a, N,L}\) that \(\textrm{R}(\Phi )(x) = x\). Thus, to prove the proposition we have to show that

for all non-negative x. Let, for \(x\ge 0\), \(x^{(0)}, \dots , x^{(L)}\) be as in (A.1). The construction of \(\Phi _{a, N,L}\) implies that \(x^{(L)} = 0\) if \((x^{(L-1)})_1 = (x^{(L-1)})_2\). Additionally, \((x^{(L-1)})_1 = (x^{(L-1)})_2\) follows if \((x^{(L-2)})_1 < a (x^{(L-2)})_2 \epsilon /2\).

As a consequence, we have to find a lower bound of \((x^{(L-2)})_2\). To begin with, we observe that for \(x \in \mathbb {M}\) the multiplication by a is exact and thus \((x^{(2)})_k = a x\) for all \(k = 2,\dots , N\). Also, we observe that for \(j = 2, \dots , L-3\) it holds for \(k\in \{2, \dots , N\}\) that

This inequality results from the observation that in the summation of each row of \(A_j\) we incur, \((N-2)\) times, an error of size at most \(\epsilon \cdot \max _{i \in \{2, \ldots , N-1\}}(x^{(j-1)})_i\). This leads to

and

for all \(k \in \{2,\dots , N\}\). Due to the construction of our NN, we also have that \((x^{(L-2)})_1 = x\).

Thus, the inequality \((x^{(L-2)})_1 < a \, (x^{(L-2)})_2 \, \frac{\epsilon }{2}\) holds if

Note that

and hence, it suffices to show that the right-hand side of (A.3) is bounded below by \(\epsilon ^{-1} = 2^{53}\). Taking logarithms, we see that this inequality is implied by

which completes the proof.\(\square \)
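The cancellation mechanism behind this proof can be reproduced in double precision with a few lines of Python. The sketch below is a hypothetical toy computation and not the network \(\Phi _{a, N,L}\) itself (the function name `toy_forward` and the layer structure are assumptions of this sketch): one quantity carries x unchanged, a second quantity s is multiplied by a and summed over \(N-1\) copies in each of \(L-3\) steps, and the last step computes \((x + s) - s\), which equals x in exact arithmetic.

```python
def toy_forward(x, a=2.0, N=3, L=30):
    """Hypothetical toy: (x + s) - s in binary64, with s = a * ((N - 1) * a) ** (L - 3) * x."""
    s = a * x  # exact when a is a power of two (barring overflow)
    for _ in range(L - 3):
        acc = 0.0
        for _ in range(N - 1):  # left-to-right sum of N - 1 amplified copies
            acc = acc + a * s
        s = acc
    # In exact arithmetic this returns x; in floating point x may be absorbed by s.
    return (x + s) - s


for x in (1.0, 3.7):
    print(x, toy_forward(x, L=5), toy_forward(x, L=30))
```

For \(a = 2\), \(N = 3\) and \(L = 30\) we have \(s = 2^{55} x\), so x lies below half a unit in the last place of s, the sum \(x + s\) is rounded to s, and the output is 0, that is, the relative error is 1. These parameters also satisfy the condition \((L-3)\log _{2}(N-1) + (L-1)\log _{2}(a-2\epsilon ) \ge 54\) of Proposition A.3. For \(L = 5\) the amplification is too small for this effect and the output stays close to x.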

A.2 Auxiliary results

A.2.1 Proof of Lemma 3.1

Proof

We denote the affine pieces of h on [0, c] by \((I_{p})_{p = 1}^P\). Here we choose the pieces as semi-open intervals \(I_{p} = (r_p, s_p]\) so that they are disjoint. Moreover, we choose \(x_p \in I_p\) for \(p = 1, \dots , P\). We claim that

To prove this, we consider the auxiliary function

Note that for \(x \in [0,1]\) either \(\#\{z \in [0, x] :h(z) = h(x) \} \le P\) or \(\#\{z \in [0, x] :h(z) = h(x) \} = \infty \), and hence \(\tilde{h}\) is well defined. We have by assumption that

If we equip \(A \times (\{1, \dots , P\} \cup \{\infty \})\) with the measure \(\lambda \times \nu \), where \(\lambda \) is the Lebesgue measure and \(\nu \) is the counting measure, then we conclude by the monotonicity of measures that

It is easy to see that

If \(h'(x_p) \ne 0\) on \(I_p\), then the right-hand side of (A.5) equals \(\lambda (h(I_p))\). If \(h'(x_p) = 0\), then the right-hand side of (A.5) is 0 and by the non-negativity of the Lebesgue measure the left-hand side is 0 as well. We conclude that

By the subadditivity of measures and (A.6), we have that

which yields (A.4). Finally, by standard properties of the Lebesgue measure under Lipschitz maps, we have that

where we used that \(I_p\) are disjoint. \(\square \)

A.2.2 Proof of Proposition 3.3

Proof

Part 1: (Proof of (3.1)) Let \(\kappa \subset [0,1]^d\) be a straight line. Recalling equation (2.5), we observe that

Inserting \(\hat{u}_j^b=(I+\varepsilon _j\Theta _j^b)u_j^b\) from equation (2.4) leads to

Let us introduce for \(k \in \{1, \ldots , N_j\}\) the random variable

and observe that

since the number of breakpoints added after applying the activation function is less than or equal to the number of \(x \in \kappa \) satisfying \((\widehat{{\textbf {A}}}_{j}\mathbbm {1}_{\hat{\eta }_{j-1}\ge 0}\hat{\eta }_{j-1}(x)+{\textbf {b}}_j)_k+(\theta _{j})_k= 0\). Per Definition 2.3, we have that \(\widehat{{\textbf {A}}}_{j}\), \(\hat{\eta }_{j-1}\), and \(\theta _{j}\) are independent random variables, which allows the following computation for \(q \in \mathbb {N}\)

By Assumption 2.7 we have that for all \(x \in \Omega \)

We pick \(v, w \in \mathbb {R}^d\) with \(\Vert v\Vert = 1\) such that \(\kappa = \{tv + w :t \in [0, \mathcal {L}(\kappa )] \}\) where \(\mathcal {L}(\kappa )\) is the length of \(\kappa \). Next, we define

Then, by the chain rule and (A.8), we have that \(\Vert h'\Vert _\infty \le 1\). Moreover,

Since by construction \((\theta _{j})_k\) is uniformly distributed on the interval \([-\lambda \varepsilon _j |(u_j^b)_k|/2,\) \( \lambda \varepsilon _j |(u_j^b)_k|/2]\), we conclude from Remark 3.2 that

Applying this to (A.7) yields (3.1).
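The role of the uniformly distributed shift can be illustrated with a small computation. The Python sketch below is a toy setting and not the construction from the proof: it takes a piecewise affine function h with \(\Vert h'\Vert _\infty \le 1\) on \([0, c]\), given by the hypothetical breakpoint lists `xs` and `ys`, and computes the expected number of affine pieces on which \(h + \theta \) has a zero when \(\theta \) is uniformly distributed on \([-\delta /2, \delta /2]\). Each piece contributes at most its own length divided by \(\delta \), so the expectation is at most \(c/\delta \).

```python
def expected_zero_count(ys, delta):
    """Expected number of affine pieces on which h + theta has a zero.

    h is the piecewise affine interpolant with values ys at its breakpoints,
    theta is uniform on [-delta/2, delta/2] (toy setting of this sketch).
    """
    total = 0.0
    for y0, y1 in zip(ys, ys[1:]):
        lo = max(min(y0, y1), -delta / 2)
        hi = min(max(y0, y1), delta / 2)
        # Length of the image of the piece inside the support of -theta.
        total += max(0.0, hi - lo)
    return total / delta


xs = [0.0, 0.25, 0.5, 0.75, 1.0]  # zigzag with slopes +1 and -1
ys = [0.0, 0.25, 0.0, 0.25, 0.0]
delta = 0.1
c = xs[-1] - xs[0]
print(expected_zero_count(ys, delta), "<=", c / delta)
```

In this example each of the four pieces is hit with probability 1/2, so the expected count is 2, well below the bound \(c/\delta = 10\). Shrinking \(\delta \), which corresponds to a smaller perturbation, weakens the bound accordingly.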

Part 2: (Proof of (3.2)) We recall the following upper bound on the total number of pieces of the realisation of an arbitrary NN:

Theorem A.4

([46]) Let \(L \in \mathbb {N}\) and \(\sigma \) be piecewise affine with p pieces. Then, for every NN \(\Phi \) with \(d=1, N_L=1\) and \(N_1, \ldots , N_{L-1} \le N\), we have that \(\textrm{R}(\Phi )\) has at most \((pN)^{(L-1)}\) affine pieces.
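The bound of Theorem A.4 can be checked on a standard example. The following Python sketch is an illustration under additional assumptions (the names `hat`, `sawtooth` and `count_affine_pieces` are hypothetical, and the grid-based count is only reliable when all breakpoints lie on the grid): it composes a hat function, realised by two ReLU neurons, `depth` times and counts the affine pieces of the result on \([0,1]\).

```python
def relu(t):
    return max(0.0, t)


def hat(t):
    # One hidden layer with two ReLU neurons; equals 2t on [0, 1/2] and 2 - 2t on [1/2, 1].
    return 2.0 * relu(t) - 4.0 * relu(t - 0.5)


def sawtooth(t, depth):
    # Composition of `depth` hat functions, i.e. a ReLU network of width 2.
    for _ in range(depth):
        t = hat(t)
    return t


def count_affine_pieces(f, n_grid):
    """Count slope changes of f on a uniform grid of [0, 1] with n_grid cells."""
    ys = [f(i / n_grid) for i in range(n_grid + 1)]
    slopes = [y1 - y0 for y0, y1 in zip(ys, ys[1:])]
    return 1 + sum(1 for s0, s1 in zip(slopes, slopes[1:]) if s0 != s1)


depth = 5
pieces = count_affine_pieces(lambda t: sawtooth(t, depth), 1024)
print(pieces, "<=", (2 * 2) ** depth)
```

If the `depth` hidden layers and the output layer are counted as \(L = \texttt{depth} + 1\) layers, then with \(p = 2\) and \(N = 2\) the bound reads \((pN)^{L-1} = 4^{\texttt{depth}}\), while the sawtooth has \(2^{\texttt{depth}}\) pieces. The number of pieces is exponential in the depth, in line with the theorem.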

Invoking Theorem A.4 results in \(\max _{k}\{\# \left( \hat{\omega }_{j,k} \setminus \omega _{j,k} \right) \} \le N^{j-1}\) for \(j = 1, \dots , L\). We observe that

Next, we invoke (3.1) and obtain that

where we used that \(e \le 3 \le N\). This completes the proof of (3.2).\(\square \)

A.3 Proof of Theorem 3.4

Proof

Part 1: We start by proving the result for \(j' = L\). Using the notation of Proposition 3.3, we assume for now that

if \(\omega _{j,k}\) is chosen as \(\omega _{j,k} = \bigcup _{k \in N_{j-1}} \hat{\omega }_{j-1,k}\) for \(j > 1\) and \(\omega _{1,k} = \varnothing \).

We will prove (A.9) at the end of this part of the proof so as not to distract from the main argument. By (A.9) and the linearity of the expected value, we obtain

By Assumption 2.6, it holds that if \((u_j^b)_k = 0\), then \(\textrm{R}((\widehat{\Phi }^\varepsilon )_j)_k = 0\) and therefore \(\# \left( \hat{\omega }_{j,k} \setminus \omega _{j,k}\right) = 0\). Hence, invoking Proposition 3.3, we conclude, using Assumption 2.6, that

We complete the proof of the first part by showing (A.9). Note that since

we have that

where we use that a piecewise affine function on a line has exactly one more affine piece than it has breakpoints, that is, distinct points at which the function is not affine.

We will show by induction over L that

which then yields the claim. For \(L = 2\), the claim follows directly by the choice \(\omega _{1,k} = \varnothing \).

Now let \(L > 2\), then

since \(\omega _{L-1,1} = \omega _{L-1,k}\) for all \(k \in N_{L-1}\) by definition. We have that

and hence

Using (A.13) and applying the induction hypothesis (A.11) to (A.12), we obtain that

which yields (A.11).

Part 2: Let \(j'>0\). We have that

where

For \(q, p \in \mathbb {N}\) and two functions \(f: \kappa \rightarrow \mathbb {R}^q\) and \(g:\mathbb {R}^q \rightarrow \mathbb {R}^p\) such that f has \(r \in \mathbb {N}\) affine pieces on \(\kappa \) and g has at most \(s\in \mathbb {N}\) affine pieces along every line, it holds that \(g \circ f :\kappa \rightarrow \mathbb {R}^p\) has at most rs affine pieces, since on the image of each affine piece of f no more than s affine pieces can be generated by g. Note that \(\varrho :\mathbb {R}^{N_{j'}} \rightarrow \mathbb {R}^{N_{j'}}\) has at most 2N pieces along each line, since every coordinate of a line can change sign at most once. Invoking Theorem A.4 again, as well as Part 1 of this proof and the monotonicity of the expected value, therefore yields that

which completes the proof.\(\square \)

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Karner, C., Kazeev, V. & Petersen, P.C. Limitations of neural network training due to numerical instability of backpropagation. Adv Comput Math 50, 14 (2024). https://doi.org/10.1007/s10444-024-10106-x

DOI: https://doi.org/10.1007/s10444-024-10106-x